Why the Musk vs. Altman Lawsuit Could Be Seen as a Show Trial

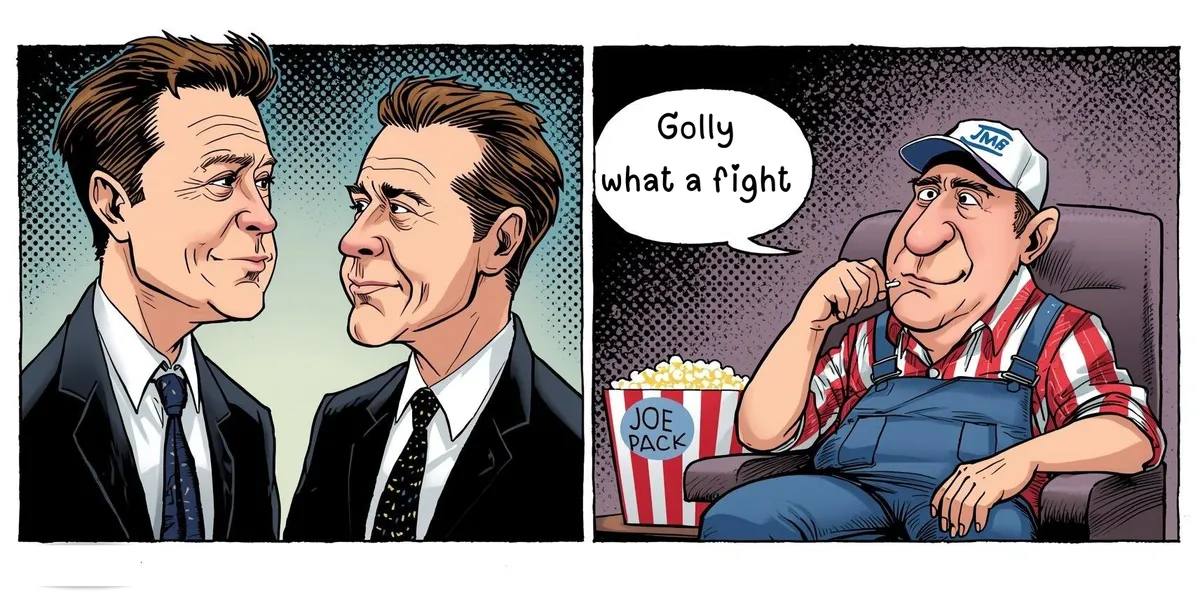

This is a trial between billionaires about the nature of public interest organizations, and the public is the only party not at the table. Looking at the trial dispassionately, one can see how everyone on the docket wins and the public loses.

Jury selection in Musk v. Altman began Monday, April 28, 2026, in an Oakland federal courtroom. The spectacle has everything: warring billionaires, a half-trillion-dollar company, whispers of betrayal, and a judge who will decide whether to unwind one of the most consequential corporate restructurings in tech history. The trial is being framed as a reckoning - a moment when someone is finally holding the AI industry accountable.

But look carefully at who benefits from each possible outcome, and a different picture begins to form. One that raises a question worth asking out loud: could this, in a meaningful sense, be a show trial?

To be clear: this is an analytical question, not a legal finding. The proceedings are real, the claims are serious, and the judge is no pushover. But a show trial doesn't require a rigged court. It simply requires that the theatrical function of the proceeding serves interests beyond the stated legal question.

The public that largely underwrote this nonprofit is invited to enjoy the circus but offered no seat at the table.

How We Got Here: A Brief History of the Idea That Changed Everything

To understand what is really at stake, you need to go back to 2017: not to a courtroom, but to a Google research lab.

That year, a team of Google Brain researchers published a paper called "Attention Is All You Need." It introduced the Transformer architecture: a method of processing language not word by word, like older AI systems, but by weighing relationships across an entire passage simultaneously. It was faster, more scalable, and more powerful than anything before it.

Then Google did something that, in hindsight, looks like one of the most consequential miscalculations in corporate history: they published the research openly.

The logic made sense at the time. Google's research culture rewarded publication; it attracted talent, established credibility, and set the direction of the field. And in 2017, the bottleneck in AI wasn't the idea; it was compute, data, and engineering scale. Google had all three. The thinking was roughly: even if everyone knows the architecture, we'll execute it best.

They were wrong. Or at least, not as right as they expected to be.

OpenAI, founded in 2015 as a nonprofit dedicated to ensuring artificial intelligence benefits humanity, took the Transformer architecture and ran with it. So did Meta, Anthropic, and dozens of others. The open publication of the foundational idea democratized access to the engine while the race to build the smartest AI remained fiercely private.

The Nonprofit That Needed to Compete

OpenAI's founding premise was explicitly adversarial to concentrated AI power. The concern was real and coherent: whoever builds the most powerful AI first sets the rules on access, on pricing, on safety decisions, on geopolitical influence. The nonprofit structure was meant to keep that power diffuse, accountable, and oriented toward public benefit rather than shareholder return.

Elon Musk was an early and significant backer, contributing roughly $38 million in OpenAI's first years. Musk’s donations helped get the project off the ground and lent it credibility. He claimed and received tax deductions for those donations - meaning taxpayers helped subsidize the early funding of what would eventually become a half-trillion-dollar enterprise.

Then came the constraint problem. Training frontier AI models costs billions. A pure nonprofit cannot issue equity. It cannot offer the compensation packages needed to retain researchers being recruited by Google, Meta, and others. So in 2019, OpenAI restructured into what it called a "capped-profit" hybrid: a for-profit arm that could raise outside capital, with returns to investors limited, and a nonprofit parent nominally still in control.

Microsoft stepped in with an initial $1 billion investment that year. The partnership has since grown to somewhere north of $13 billion. Microsoft provides the cloud infrastructure, Azure, that is arguably the real fuel of the operation. Without it, OpenAI cannot train its models at scale.

The nonprofit had become, in structural terms, deeply dependent on one of the world's largest for-profit technology corporations. In October 2025, OpenAI completed its conversion to a Public Benefit Corporation: a for-profit structure with a public-benefit mandate written into its charter, but with fiduciary duties that now run to shareholders.

Musk's Argument and the Strategic Subtext

Elon Musk filed his lawsuit in early 2024, arguing that OpenAI's leadership had breached the founding nonprofit charter, violated charitable trust obligations, and unjustly enriched themselves at the expense of the public mission. He has sought damages exceeding $130 billion, the removal of Sam Altman and Greg Brockman from their roles, and the unwinding of the 2025 for-profit restructuring. He has stated that any damages should go to OpenAI's charitable arm and not to himself.

Notably, Musk dropped his fraud claims just before trial, narrowing the case to unjust enrichment and breach of charitable trust. This was a significant strategic move. Unjust enrichment doesn't require proving anyone lied; it requires showing that someone was enriched at another's expense through an unfair transaction. It turns on the structure of what happened, not the intent behind it.

The argument is coherent and, in places, compelling. But it sits alongside an uncomfortable fact: Elon Musk is the founder of xAI, a direct competitor to OpenAI in the frontier AI market. A ruling that undermines OpenAI's for-profit structure, complicates its planned IPO, or forces the removal of its CEO would not merely vindicate a principle. It would materially benefit Musk's own commercial interests.

The Show Trial Question

Here is the observation that the mainstream coverage of this trial has largely sidestepped: both parties could be seen as benefiting from the same underlying outcome.

If Musk wins, OpenAI's conversion is legally contested, its IPO is complicated, and the Microsoft partnership faces scrutiny. xAI, which has operated as a straightforward for-profit since inception with no nonprofit pretense to maintain, faces one fewer well-capitalized competitor.

If Musk loses, OpenAI's for-profit conversion is legally blessed. The Microsoft partnership is validated. The pathway to a public offering is cleared. The argument that a nonprofit can raise billions from a major tech corporation, restructure into a commercial entity, and face no meaningful accountability becomes settled precedent.

In neither scenario does the public gain a structural alternative to concentrated, private control of advanced AI. In neither scenario does anyone answer the question that OpenAI's founding was supposed to resolve: can a mission-driven entity steward transformative technology without being captured by the capital it needs to operate?

The trial is real. The legal claims are serious. But the theatrical function of the proceeding, the impression that someone is holding AI accountable, may obscure the fact that the entity with the most at stake, the public, has no attorney in that courtroom.

The Tax-Subsidized Pipeline

There is a detail in this story that deserves more attention than it has received. Musk donated to OpenAI and took tax deductions for those donations. He is now, in his own legal filings, arguing that his donations should be treated more like capital contributions that entitle him, or at least the public, to a share of the resulting value.

OpenAI's own response makes the stakes plain: the company argues that Musk accepted the tax benefit of a charitable donation and cannot now seek the economic upside of an investment. That is a legally reasonable position. But it points to something larger.

The early funding of OpenAI, the seed capital for what became a half-trillion-dollar enterprise, was partially subsidized by the American taxpayer through the nonprofit tax treatment of those donations. The public, in a real sense, helped pay for the foundation. But it does not appear set to share in the return.

The Missing Plaintiff

Both Musk and Altman are sophisticated actors with legal teams, public platforms, and financial resources that dwarf the GDP of many nations. The adversarial framing of this trial is genuine: they appear to genuinely dislike each other, and the stakes for both are real.

But the adversarial framing also serves both of them. It keeps public attention on the personality conflict rather than the structural question. It frames the proceedings as accountability in action. And it leaves unasked the question that a true public-interest proceeding might ask: not who controls OpenAI, but whether any private actor - Musk's xAI included - should hold unchecked power over technology of this consequence.

The AI industry has produced, in a remarkably short time, a small number of extremely powerful actors: OpenAI/Microsoft, Google/DeepMind, Anthropic (backed by Amazon and Google), Meta, and xAI. Each traces its foundational architecture to the same publicly disseminated research. Each is now building proprietary systems, guarding its models closely, and competing for dominance over infrastructure that will shape how billions of people access information, make decisions, and understand the world.

The Musk v. Altman trial may clarify who gets to control one of those actors. It will not answer whether the concentration itself is acceptable. Which raises the question nobody in that Oakland courtroom is asking: should Joe Six-Pack, the public, be a party to this lawsuit?

~David Henson | Citizen Octopus

About the Author

David Henson is an inventor, publisher, writer and founder of Citizen Octopus, a site focused on analyzing systems, incentives, and how information shapes perception.